Every site visitor brings their own quirks, moods, and tiny habits. Someone scrolls with a lazy weekend vibe, someone else taps fast like their coffee’s getting cold. These small actions leave clues that shape the whole story of a visit. AI tracking reads these signals nonstop and helps sites smooth out the experience without visitors even noticing. It runs in the background, yet it shapes how folks move through a page.

Many sites still run on guesswork. That leads to clunky flows, random drop-offs, or people bailing from forms like they saw a ghost. AI tracking pulls hints from scrolling patterns, micro-pauses, taps, form edits, and jittery cursor moves. When a site reacts to these hints, the page starts feeling more natural to the visitor.

A few real stats show how sensitive user behaviour can be:

- 62% of shoppers that struggle to complete purchases abandon their carts. Source: https://www.retaildive.com/news/study-62-of-shoppers-that-struggle-to-complete-purchases-abandon-their-ca/586749/

- 88% of users avoid returning after a bad site visit. Source: https://changetify.com/the-importance-of-user-experience-why-88-of-website-users-dont-return-after-a-bad-experience/

These numbers kinda slap hard, but they point toward smart fixes.

This section explains how AI tracking reads those signals and helps sites offer better paths for users.

Key Takeaways:

- AI tracking studies how people move, pause, and interact with a page.

- These signals show what feels confusing, what grabs interest, and where trust feels weak.

- Teams use this info to fix layouts, adjust CTAs, shorten steps, and make each visit feel lighter.

- Stronger clarity brings higher comfort for users and leads to better actions on key pages.

- This approach gives websites a steady way to improve both experience and conversions without guesswork.

Key role of AI signals in user journey shifts

AI tracking keeps watching user behaviour across the journey, picking up tiny patterns that classic analytics miss. People don’t always move in straight lines. They drift, pause, skim, or get distracted. AI tools catch these habits and flag what needs fixing. The whole thing feels like someone quietly translating user body language.

Here are the types of signals AI tools usually pick up:

- Scroll pace

- Unexpected stops

- Cursor shake or jitter

- Hover delays

- Rage clicks

- Repeated form edits

- Return visits to the same element

These signals help decode the subtle problems hiding in plain sight.
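To make one of these signals concrete, here is a minimal sketch of how rage clicks could be spotted in a click log. The `Click` shape, the `find_rage_clicks` name, and the thresholds (3 clicks within 1000 ms) are assumptions for illustration, not how any particular tool defines the signal.

```python
from dataclasses import dataclass


@dataclass
class Click:
    """One click event; the field names are assumptions for this sketch."""
    element_id: str
    timestamp_ms: int


def find_rage_clicks(clicks, min_clicks=3, window_ms=1000):
    """Return ids of elements that got `min_clicks` or more clicks within `window_ms`."""
    by_element = {}
    for click in clicks:
        by_element.setdefault(click.element_id, []).append(click.timestamp_ms)

    flagged = set()
    for element_id, times in by_element.items():
        times.sort()
        # Slide a window of `min_clicks` consecutive clicks over the sorted times.
        for i in range(len(times) - min_clicks + 1):
            if times[i + min_clicks - 1] - times[i] <= window_ms:
                flagged.add(element_id)
                break
    return sorted(flagged)


clicks = [
    Click("buy-button", 0),
    Click("buy-button", 300),
    Click("buy-button", 650),
    Click("nav-logo", 100),
    Click("nav-logo", 5000),
]
print(find_rage_clicks(clicks))  # ['buy-button']
```

The thresholds are worth tuning per site: a tighter window catches only real frustration, a looser one also picks up impatient double-clickers.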

How data patterns guide experience updates

Patterns show up when many users repeat the same move. AI highlights which parts users skim too fast or stare at for too long. These patterns guide site teams to:

- Shrink heavy content blocks

- Add clarity notes

- Reorder sections

- Improve CTA visibility

- Remove distractions

Below is a simple table showing what patterns often mean:

| Behaviour Pattern | What It Suggests | Helpful Fix |
|---|---|---|
| Fast scroll drop | Section feels boring | Rewrite, add visuals |
| Long pause on small text | Confusion | Add clarity line |
| Hover near CTA but no click | Doubt | Add tiny reassurance |
| Repeated jumps back | Unclear info | Reorder content |

These fixes are tiny but strong.

How behaviour cues shape on-page changes

When users get confused, their actions change. AI catches cues like:

- Hovering near buttons with no action

- Repeated returning to the same feature

- Form fields being cleared and retyped

- Cursor freeze before pricing

These cues guide on-page adjustments. Teams shift layout, tighten copy, add tooltips, or move CTAs where they feel natural. Little adjustments move the whole flow forward.

How session insights show friction points

Session recordings show the messy side of user behaviour. AI groups this chaos into clear friction maps. Instead of guessing, teams see:

- Where users rage tap

- Where scroll paths break

- Where visitors look lost

- Which elements feel too small

- Where people stall on forms

A friction map makes it obvious where users struggle, so teams don’t waste days testing random fixes.
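A friction map can be as simple as a count of how many sessions hit the same snag on the same element. The sketch below assumes each session has already been reduced to a list of `(element, signal)` pairs; the element names, signal labels, and the `build_friction_map` helper are all hypothetical.

```python
from collections import Counter


def build_friction_map(sessions):
    """Count how many sessions showed each (element, signal) pair."""
    counts = Counter()
    for session in sessions:
        # Use a set so a session that repeats a signal is counted once.
        for pair in set(session):
            counts[pair] += 1
    return counts


sessions = [
    [("signup-form", "stall"), ("hero-image", "rage_tap")],
    [("signup-form", "stall"), ("signup-form", "stall")],
    [("hero-image", "rage_tap"), ("signup-form", "stall")],
    [("footer-link", "too_small")],
]

friction = build_friction_map(sessions)
for (element, signal), n in friction.most_common(2):
    print(f"{element}: {signal} in {n} of {len(sessions)} sessions")
```

Counting sessions rather than raw events keeps one very angry visitor from drowning out a problem that many visitors hit quietly.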

Why AI tracking lifts overall site actions

Websites often hold unintentional clutter, and users trip over it without noticing why. AI tracking helps untangle this clutter by showing how people really behave. People don’t surf with perfect logic. They skim, freeze, jump around, get distracted. AI tracking shows how to shape the flow around their natural rhythm.

AI tracking lifts site actions because it surfaces the signals behind behaviour instead of stopping at surface metrics.

Signals that show content relevance

Content only works when people react to it. AI flags relevance through patterns such as:

- Slow, steady scrolls

- Longer pause time

- Cursor lingering

- Multiple return visits to the same point

- Users sharing or bookmarking sections

These behaviours hint that the content hits user needs. Content teams can build more of the good stuff and trim the dull parts.

Indicators showing user hesitation

User hesitation looks subtle, but AI sees it clearly.

Typical hesitation signals:

- Hovering near pricing text

- Cursor freeze on form labels

- Jumping to FAQ before making a decision

- Re-reading the same sentence

- Quick backward scrolls

These signals highlight where users feel unsure. Teams reduce hesitation by adding clarity notes, trust badges, micro-messages, or shorter explanations.
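As a rough illustration, hesitation can be approximated as "hovered for a while, never clicked". The event tuple shape, the `hesitant_hovers` name, and the 2-second threshold below are assumptions for this sketch, not a standard definition.

```python
def hesitant_hovers(events, min_hover_ms=2000):
    """Return elements hovered for at least `min_hover_ms` in total and never clicked.

    `events` is a list of (kind, element_id, timestamp_ms) tuples where kind is
    "enter", "leave", or "click". This shape is an assumption for the sketch.
    """
    entered_at = {}
    hover_total = {}
    clicked = set()
    for kind, element_id, ts in sorted(events, key=lambda e: e[2]):
        if kind == "enter":
            entered_at[element_id] = ts
        elif kind == "leave" and element_id in entered_at:
            start = entered_at.pop(element_id)
            hover_total[element_id] = hover_total.get(element_id, 0) + ts - start
        elif kind == "click":
            clicked.add(element_id)
    return sorted(
        element
        for element, total in hover_total.items()
        if total >= min_hover_ms and element not in clicked
    )


events = [
    ("enter", "pricing", 0),
    ("leave", "pricing", 2500),
    ("enter", "cta", 3000),
    ("click", "cta", 3200),
    ("leave", "cta", 6000),
    ("enter", "faq", 6500),
    ("leave", "faq", 7000),
]
print(hesitant_hovers(events))  # ['pricing']
```

Here the pricing text gets flagged, the CTA does not because it was clicked, and the FAQ hover is too brief to count.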

Metrics that point to trust building gaps

Trust gaps show up when users move nervously through high-stakes sections.

Common trust-gap signals include:

- Fast jumps to policy pages

- Repeated visits to reviews

- Quick exits after form reveal

- Heavy scanning of product details

- Long pause on disclaimers

A table summarises these signals:

| Trust Signal | Meaning | Helpful Response |
|---|---|---|
| Heavy FAQ usage | Doubts | Add clarity above fold |
| Quick exit after pricing | Fear of risk | Add benefits nearby |
| Too much scanning | Low confidence | Add review snippet |
| Repeated scrolls | Missing detail | Add short bullet list |

Trust wins half the battle for conversions.

Ways AI tracking improves experience flow

A smooth user flow feels like a friendly guide, not a pushy salesperson. AI tracking makes this easier because it reads how people naturally behave. Every user has a browsing vibe. Some take it slow, some zip through pages, some skim like they’re speed-reading a menu at a loud cafe. AI learns these patterns and helps shape a flow that feels comfortable.

Predictive cues for better content timing

AI systems guess what users might need next based on earlier signals.

These predictive hints appear when tools notice:

- A return to earlier sections

- Slow reading on a technical part

- Skips around certain content

- Re-reading pricing details

Teams can then place:

- Relevant FAQs

- Pop-up help

- CTA nudges

- Short visual breaks

This timing prevents users from drifting off.

Heat signals that show user attention

Heatmaps show where attention burns hottest. They highlight:

- High-focus regions

- Cold zones with no interest

- Areas users miss completely

- Unexpected hotspots

- Dead areas that look clickable

This helps teams refine:

- CTA spots

- Content layout

- Button spacing

- Image placement

- Key message order

It’s like knowing where shoppers stop inside a store, without following them around like some weird detective.

Input hints that reduce decision stress

Forms and inputs often turn calm users into frustrated ones. AI flags stress points like:

- Field corrections

- Empty mandatory fields

- Long hover time

- Clicking outside the field repeatedly

- Switching tabs mid-form

Fixing these reduces mental load.

Helpful adjustments include:

- Shorter forms

- Inline hints

- Autofill

- Fewer distractions

- Cleaner layout

Users finish tasks with less friction and fewer “ugh why is this form so annoying” moments.
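Field corrections are one of the easier stress points to measure. A minimal sketch, assuming the tracker records a `(field, value)` snapshot on every input change: count each time a field's value starts getting shorter. The `count_field_corrections` name and the snapshot format are hypothetical.

```python
def count_field_corrections(input_events):
    """Count, per field, how many separate deletion runs happened.

    `input_events` is an ordered list of (field, value) snapshots.
    """
    last_len = {}
    shrinking = {}
    corrections = {}
    for field, value in input_events:
        previous = last_len.get(field, 0)
        is_shorter = len(value) < previous
        # Count only the start of a deletion run, not every backspace.
        if is_shorter and not shrinking.get(field, False):
            corrections[field] = corrections.get(field, 0) + 1
        shrinking[field] = is_shorter
        last_len[field] = len(value)
    return corrections


input_events = [
    ("email", "sam@exmp"),
    ("email", "sam@exm"),
    ("email", "sam@ex"),
    ("email", "sam@example.com"),
    ("email", ""),
    ("email", "sam@example.com"),
    ("name", "Sam"),
]
print(count_field_corrections(input_events))  # {'email': 2}
```

A field that racks up corrections across many sessions is a good candidate for an inline hint or a clearer label.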

How AI tracking helps improve user experience and conversions

AI tracking gives conversion steps clearer direction. Users leave hints in every move. Some bounce from a CTA like it’s too hot to touch, some scroll slow as if they’re thinking too much, some re-check a section three times like they lost their car keys. These small actions shape strong insights. When a site matches these habits with clean touchpoints, conversions start rising naturally.

AI doesn’t “force” users down a funnel. It just reads behaviour and shows what the flow should feel like. Many users drop off from confusion, hesitation, or distrust, not because the offer is bad. AI tracking digs into those subtle cues and keeps nudging site owners toward better decisions.

Below are the areas where AI tracking gives conversions a serious lift.

Behaviour signs for stronger CTAs

CTAs fall flat when users don’t vibe with the timing or wording. AI tracks:

- Hover delay before clicking

- Cursor wobbles around buttons

- Quick scrolls past key CTAs

- Rage clicks on dead elements

- Click attempts on non-clickable zones

This list shows small frustrations users usually never voice. Tweaking CTA placement, tone, and spacing becomes easier with these signals.

Interest signals that guide funnel steps

Funnel steps should feel natural. AI spots:

- Repeated product comparisons

- Scrolling back to FAQs

- Hints of pricing anxiety

- Instant exit after form reveal

- Quick jumps to trust sections

These hints point toward weak spots in the funnel. Teams can rearrange steps so users don’t feel lost. Kind of like giving a map to someone who keeps missing turns.

Priority metrics for higher lead quality

Different users show different levels of intent. AI tracks patterns like:

| User Action Signal | Meaning | Funnel Use |
|---|---|---|
| Returns to the same section | High interest | Show reassurance content |
| Long pause on a product detail | Strong intent | Offer micro-CTA near detail |
| Fast jump to pricing | Purchase curiosity | Show benefits beside pricing |
| Scroll repeats | Mixed clarity | Add cues or tooltips |

This table helps you see how signals push teams toward better decisions. Lead quality rises when you act on these behaviours instead of sticking to fixed scripts.
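One way to act on signals like these is a simple weighted score per visitor. The weights, the per-signal cap, and the `score_lead` helper below are made-up starting points for illustration; real values would need tuning against your own conversion data.

```python
# Hypothetical weights, loosely following the signals in the table above.
SIGNAL_WEIGHTS = {
    "returns_to_section": 2,
    "long_pause_on_detail": 3,
    "fast_jump_to_pricing": 2,
    "scroll_repeats": 1,
}


def score_lead(signal_counts, cap_per_signal=3):
    """Sum weight * count for each known signal, capping each count."""
    score = 0
    for signal, count in signal_counts.items():
        weight = SIGNAL_WEIGHTS.get(signal, 0)  # Unknown signals add nothing.
        score += weight * min(count, cap_per_signal)
    return score


visitor = {"returns_to_section": 2, "long_pause_on_detail": 1, "scroll_repeats": 5}
print(score_lead(visitor))  # 2*2 + 3*1 + 1*3 = 10
```

The cap stops one noisy signal, like endless scroll repeats, from making a confused visitor look like a hot lead.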

Steps to use AI tracking in your workflow

AI tracking supports decisions only when your workflow has structure. Many businesses collect signals but never translate them into meaningful actions. A smart workflow turns raw behaviour into clear fixes that help users move faster without feeling pressured.

Here’s how you slot AI tracking smoothly into your day-to-day flow.

AI tools don’t do magic on their own. They need consistent reviews, quick tests, and fast fixes. A team that reads behavioural signals every few days stays ahead, while a team that checks once a month gets stuck repeating old mistakes.

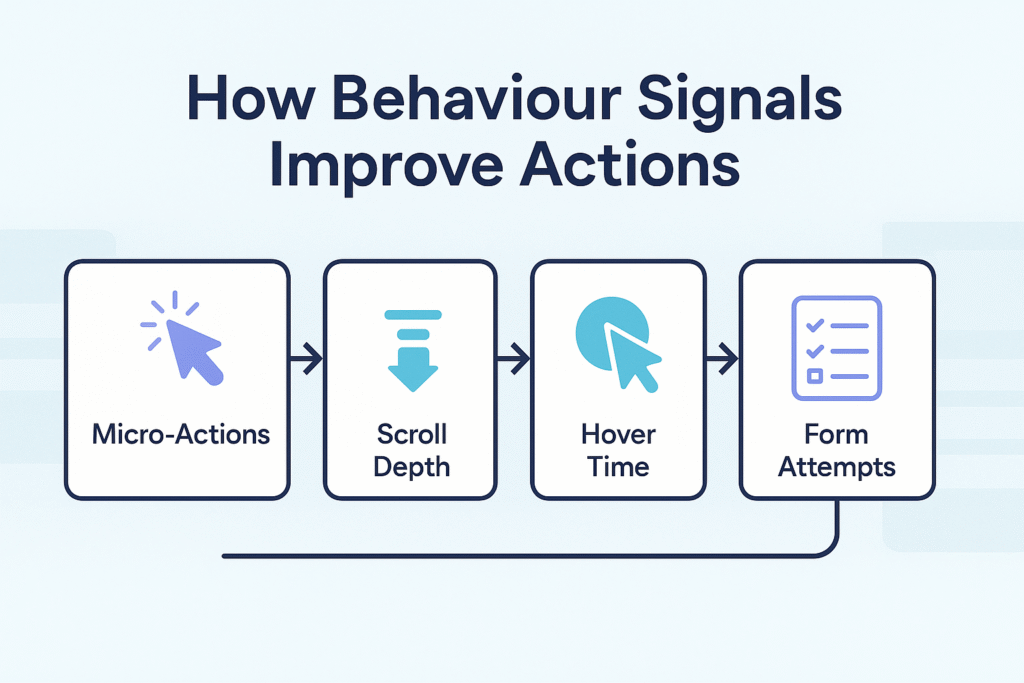

Picking the right intent-based metrics

Focus on metrics that reflect user desire, not vanity data.

Helpful intent metrics include:

- Scroll depth with pauses

- Hover time on CTAs

- Repeat visits to the same element

- Cursor hesitation around trust parts

- Form correction attempts

These show how users think during the journey. When the numbers shift, it points to a deeper behavioural change.
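For example, "scroll depth with pauses" can be derived from a plain list of scroll samples. This sketch assumes the tracker logs a `(timestamp_ms, depth_percent)` sample whenever the scroll position changes, plus a final sample when the visitor leaves. The function name and the 1.5-second pause threshold are assumptions.

```python
def scroll_depth_with_pauses(samples, min_pause_ms=1500):
    """Return (max_depth, pauses) for time-ordered (timestamp_ms, depth_percent) samples.

    `pauses` is a list of (depth_percent, duration_ms) tuples.
    """
    if not samples:
        return 0, []
    max_depth = max(depth for _, depth in samples)
    pauses = []
    for (t0, d0), (t1, _) in zip(samples, samples[1:]):
        # The visitor stayed at depth d0 from t0 until the next sample at t1.
        if t1 - t0 >= min_pause_ms:
            pauses.append((d0, t1 - t0))
    return max_depth, pauses


samples = [(0, 0), (400, 20), (800, 45), (4800, 60), (5200, 80), (9000, 80)]
print(scroll_depth_with_pauses(samples))  # (80, [(45, 4000), (80, 3800)])
```

Here the visitor reached 80% of the page and stopped twice, once at 45% and once at 80%, which says more than the depth number alone.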

Aligning signals with user-focused goals

Your goal shouldn’t be traffic bragging. A better aim is: “help the user finish what they came for.”

To match signals with user goals, track:

- Where users hesitate

- Where they skip

- Where they show curiosity

- Where they drop

A simple alignment chart helps keep goals clear:

| Goal Type | User Signal | What To Adjust |
|---|---|---|
| Reduce doubt | Hovering near pricing | Add quick reassurance notes |
| Lower drop rate | Rage clicks | Fix UI breaks or dead spots |
| Increase action | Long pauses near CTA | Change timing or text |
| Improve clarity | Repeat scrolls | Reword sections or add visuals |

Running site improvements from insights

AI tracking only matters when you turn signals into actions.

A good improvement routine includes:

- Weekly signal checks

- Quick UX microtests

- Heatmap comparison reviews

- CTA placement experiments

- Form correction analytics

These tiny steps stack up like bricks. Over time, the structure becomes solid and pleasant for users.

Tools that support AI-driven tracking needs

AI tracking tools read behaviour at scale and mark patterns with high detail. Each tool has its own vibe. Some specialize in heat signals, some in session replays, some in funnel predictions. Teams often mix two or three so they don’t miss important insights.

Picking a tool without testing feels like wearing shoes two sizes off. You need fit and comfort. A quick trial helps you see what matches your site’s behaviour volume, budget, and workflow speed. Below are categories that usually cover most needs.

Tools that give event-based behaviour data

These tools read micro-events such as:

- Click delays

- Hover tension

- Scroll velocity

- Confused cursor movements

Popular options for event data:

| Tool Name | Core Use | Notes |
|---|---|---|
| Heap | Auto event tracking | Great for teams wanting less manual setup |
| Pendo | User path shaping | Good for SaaS journeys |
| Mixpanel | Behaviour funnels | Works well for product-heavy sites |

Tools with session-replay prediction help

These tools show real user journeys like a screen recording, which is useful for noticing rage taps, weird detours, or UI glitches.

Options:

Session playback feels like reading a person’s mind, but without stalking them at their desk.

Tools that offer funnel impact reporting

Funnel tracking tools highlight:

- Step leaks

- CTA pressure points

- Form hesitation patterns

- Trust-building gaps

Popular ones:

They provide clean diagrams that help teams act without drowning in raw numbers.

Common mistakes that reduce AI insights value

Even good data goes sideways when misunderstood. Many teams jump on trends or copy competitors. AI tracking shines when you match it with user behaviour on your own site. People shop, read, and decide differently on every page.

When mistakes repeat, insights lose meaning. Teams then blame tools, even though the problem sits in poor interpretation. Below are mistakes that drain value from AI insights.

1. Over-reliance on raw signal collections

Collecting data without context feels like reading random text messages from strangers. Raw signals need interpretation or they mislead the team. Always connect behaviour with:

- Device type

- Session time

- Entry point

- Past actions

- Content viewed

2. Misread cues from short user sessions

Short sessions don’t always mean disinterest. Some users know exactly what they want and click quickly. Misreading fast exits or short scrolls leads to wrong fixes. AI tools help separate rushed sessions from confused ones.
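A very rough way to picture that separation: look at what happened inside the short session, not just its length. The labels, the 30-second cutoff, and the `classify_short_session` helper are assumptions for this sketch. Real tools likely use far richer models.

```python
def classify_short_session(duration_s, reached_goal, friction_signals, short_limit_s=30):
    """Label a session using duration, goal completion, and a friction-signal count."""
    if duration_s > short_limit_s:
        return "not_short"
    if reached_goal:
        # Quick and successful: the visitor knew what they wanted.
        return "decisive"
    if friction_signals > 0:
        # Quick exit after rage clicks, back-scrolls, or similar.
        return "confused"
    return "unclear"


print(classify_short_session(12, reached_goal=True, friction_signals=0))   # decisive
print(classify_short_session(12, reached_goal=False, friction_signals=3))  # confused
print(classify_short_session(12, reached_goal=False, friction_signals=0))  # unclear
```

Only the "confused" bucket clearly calls for a fix, and the "unclear" one is a reason to gather more context before changing anything.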

3. Lack of alignment with actual user needs

Teams often chase internal targets. Users just want value, comfort, and clarity. Whenever site changes ignore user behaviour, insights lose weight. Align fixes with what users actually did, not what you wanted them to do.

Future shifts shaping AI tracking outcomes

AI tracking keeps getting sharper. Behaviour analysis grows richer every year. Users change habits fast. They jump between apps, ads, pages, and devices like they’re flipping channels on a lazy weekend. AI adapts by reading deeper patterns.

Tomorrow’s AI tools will read subtle cues with more accuracy. The systems will guess intent earlier, making UX smoother for every type of visitor. That gives site owners a strong edge in conversions.

Better mapping of user interest triggers

Future models might read emotional signals from hesitation, scroll patterns, and mouse pressure. This can help teams show support elements at just the right moment.

Stronger models for session pattern study

New models will group users with similar browsing vibes. This grouping helps personalize site experiences without manual segmentation.

Fine-tuned intent detection for funnels

Funnels will adjust themselves. If users show confusion, the page will reorder or show better help blocks. The journey becomes lighter and less tiring.

Also Read:

1. How Brands Can Check Their Presence in AI Answers?

2. How AI Tracking Tools Benefit Your SEO Workflow?

3. How to Choose the Right AI Tracking Tool?

4. Top AI Visibility Trackers for Brand Monitoring in AI Search

Conclusion:

AI tracking turns the messy noise of user actions into small clues that feel super handy for anyone running a site. People tap, pause, scroll weirdly, hesitate, backtrack, get picky, get excited. These tiny moves tell a story that old-school analytics kinda struggles to catch. A site that reads these signals has a better shot at giving users a smooth ride instead of a clunky maze. Feels like giving folks a comfy seat instead of a wobbly chair.

Users want pages that get them without begging for patience. When AI tracking points out the awkward bits, teams fix stuff quicker and with more accuracy. Pages start feeling lighter. Tasks don’t feel like homework. That’s when folks stay longer, click more, sign up, buy, or simply chill while exploring the content. A good flow speaks louder than any fancy slogan.

AI tracking won’t do the job alone. It grows sharper when you mix real curiosity with real user care. Read the signals often, adjust your pages like you’re tuning a guitar, and keep an eye on what people actually do on the screen. That rhythm keeps UX fresh and conversions steady.
